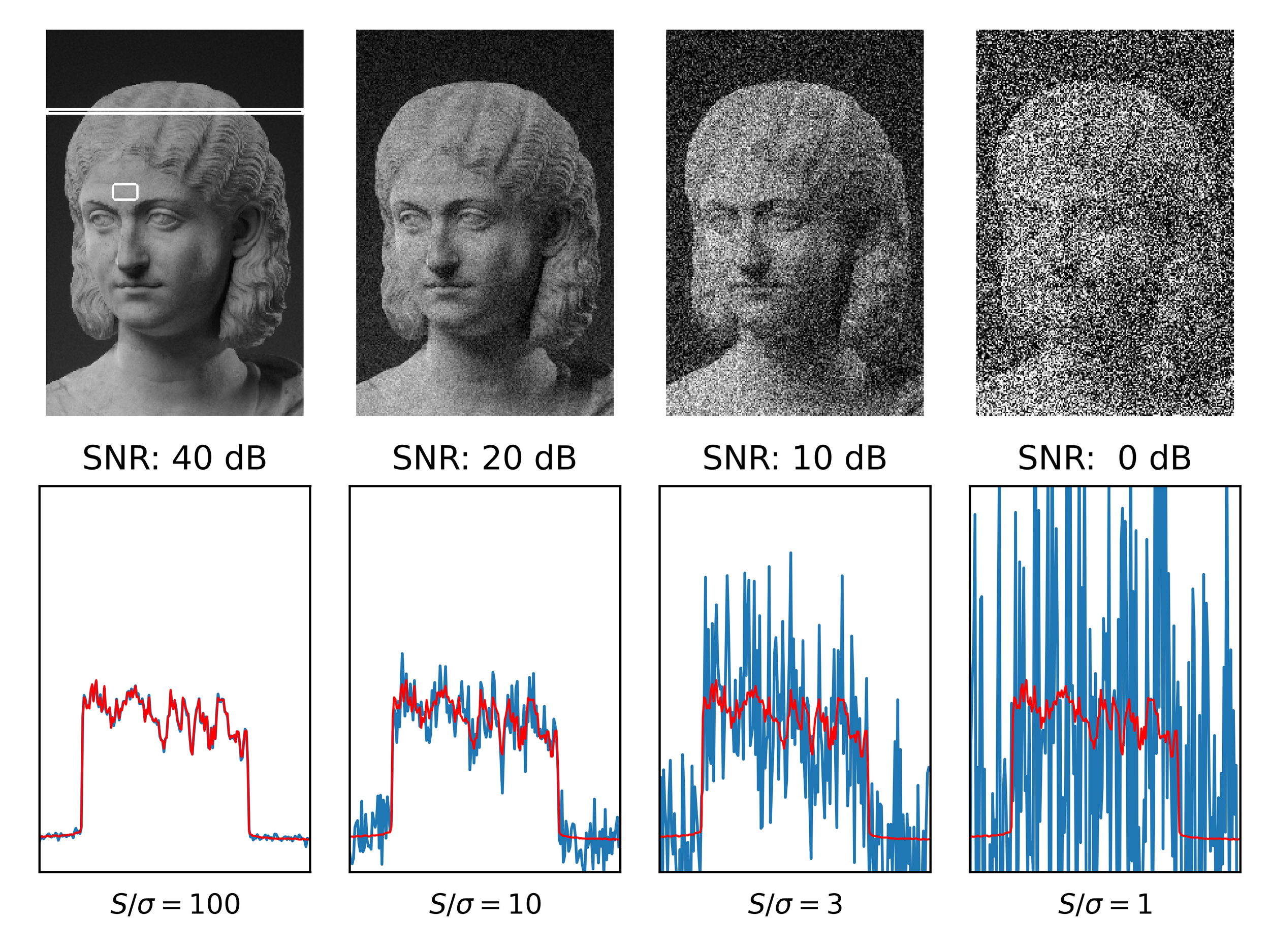

The Noise Floor

There's a concept in signal processing called the noise floor — the ambient level of unwanted signal below which useful information can't be recovered. Raise the noise floor enough and the signal doesn't just get harder to find. It disappears.

That's what's happening to hiring right now.

I lead teams of talented folks who build AI-powered software. So I spend a lot of time thinking about what AI is doing to the people who would use software like ours — and the people who would build it. The picture is more complicated than the headlines suggest, and considerably more unsettling than the official line.

The Official Line Has a Gap

Anthropic — whose technology I depend on — recently published research on AI's labor market impacts. The findings are careful and worth reading: broad, catastrophic displacement isn't measurable yet. I find that plausible.

I also find it incomplete. Because the data is lagging the reality, and the reality is showing up in places like Block.

In March 2026, Block issued a direct warning to its workforce: AI performance improvements have made some current headcount indefensible. RIF — reduction in force — is back in the active vocabulary of working professionals. That's not a data point in a research paper. That's a staffing decision in a boardroom.

These aren't isolated signals. They're the early visible indicators of a constraint shift that's been building quietly for two years.

The Rung Got Removed

Here's the part that I find genuinely uncomfortable to sit with: the jobs AI is absorbing fastest are the ones at the bottom of the ladder.

Code reviews. QA passes. First-draft documentation. Junior research. Data entry with judgment attached. These aren't jobs that will be transformed and enriched over time. They're being redistributed — to AI — right now. The people most dependent on that rung are younger workers. The workers who were supposed to climb their way in.

The rung is being removed while they're still climbing.

This matters beyond any individual career. Entry-level roles were never just jobs. They were how organizations identified the next generation of senior engineers, product leaders, and executives. We are now selectively defunding that pipeline. The downstream consequences aren't fully priced in yet.

The Doors Stopped Opening

What I hear from people who used to move adroitly — from role to role, company to company, their résumé as currency — is that something has changed. The doors aren't swinging open the way they used to.

Some of that is cyclical. Some of it is macroeconomic. And some of it is what happens when a large, newly displaced population of technically skilled people all arrive at the same conclusion at roughly the same time: if I can't outrun the AI, I'll use it to compete.

The result is a flood.

Open a mid-level engineering role at a recognizable company today and expect hundreds — sometimes thousands — of applications within 48 hours. Most will be formatted correctly. The language will be polished. Keywords will align with the job description with unusual precision. Because they were generated to align with it.

AI-assisted job applications aren't inherently dishonest. Using AI to improve a résumé isn't fundamentally different from working with a career coach. But when every application is AI-optimized, the signal flattens. You're no longer reading a résumé. You're reading a template rendered in someone's approximate voice.

That's the charitable interpretation.

It Gets Darker

Microsoft's threat intelligence unit published a finding this week that's worth sitting with longer than most people will.

North Korean state-backed operatives are using AI (voice-changing software, face-swap tools, AI-generated identity documents) to successfully impersonate Western software developers. They're getting hired for remote IT roles. Once they're inside, their wages go back to Pyongyang. They use AI to do the actual work. They threaten to release sensitive company data if discovered.

Last year alone, Microsoft disrupted over 3,000 fake accounts tied to these operations.

This is not a fringe phenomenon. It is a scaled, state-sponsored industrial operation — and it is running on the same AI-assisted job application infrastructure that everyone else is using. The technical gap between a polished AI résumé and a fabricated identity designed to defeat ATS screening is narrower than most hiring teams want to acknowledge. The Microsoft report details AI tools used to generate culturally appropriate name lists, manufacture matching email formats, scan job postings for keywords, and keep hired fraudsters employed long enough to extract value.

The hiring signal isn't just flattened. In some cases, it's been weaponized against you.

The Arms Race You Didn't Sign Up For

The obvious response — use AI to screen for AI — runs into a problem immediately.

The same tools generating fraudulent applications can be tuned to defeat the filters. You're in an arms race with adversaries who have considerably more time to dedicate to it than your recruiting team does. The ATS vendors are selling you filters. The people gaming those filters have already read the vendor documentation.

There's also a deeper problem: AI filtering for AI doesn't solve the signal problem, it relocates it. You're still not finding out whether the person who cleared your screen can actually do the job, make sound decisions under ambiguity, and be accountable for outcomes. You've just moved the noise floor up a level.

What Actually Works

Some organizations are figuring this out. Tolan completely redesigned their engineering hiring loop. (The irony that a company building an AI companion app to help people feel grounded and connected now requires in-person interviews is genuinely not lost on me.)

Rather than filter by résumé, they give candidates a real product specification and a few hours to build something using whatever tools they'd use on the actual job. AI tools included. The question isn't whether you used AI. It's what you did with it.

They're evaluating judgment in the presence of AI — not the absence of it. Can you architect a solution? Can you question unclear requirements? Can you recognize when the output needs work? Can you own the code the machine wrote?

Presence matters again. Not because remote work doesn't work, but because the verification layer is compromised. Video screening — with attention to the tells Microsoft identified, including pixelation at face edges, inconsistent lighting, and unnatural eye behavior — is becoming a legitimate first filter. Not because most applicants are fraudulent. Because the ones who are have gotten very good at being indistinguishable on paper.

Both Sides of the Table

I want to be useful here to both parties. The same signals that tell a hiring manager they're looking at a real engineer are the signals a job seeker should be deliberately producing. None of this is complicated. Most of it isn't being done.

Document the "why," not just the "what." AI generates task lists well. It does not generate trade-off reasoning. The difference between an AI-polished résumé and a human one isn't vocabulary — it's the presence of constraints and the absence of perfect confidence.

A purely AI-generated project description reads: "Built a microservices architecture using Go and Kafka to improve scalability." That's a features list. A human who actually did that work writes: "Migrated from a monolith to Go microservices. Chose Kafka over RabbitMQ specifically to handle 10x spikes in event-logging during peak traffic, accepting the increased operational complexity for better data persistence." Same outcome. Completely different signal. One describes what was built. The other explains why — and names the trade-off that was accepted. AI doesn't make trade-offs. You do.

Produce proof that can't be generated retroactively. A GitHub link is no longer sufficient verification. The code itself might be AI-generated — and increasingly, it is. Recruiters are now looking for the paper trail of how you think under pressure. Link to specific pull requests where you engaged in a real technical debate. How you respond to a "nit" or a refactor request is a high-signal human trait. Include a post-mortem or lessons-learned section for any significant project — not the polished version, but the honest one that details how you diagnosed a production incident, what investigative path you took, and what you'd do differently. Generic AI can't write that section because generic AI wasn't there.

Reframe from coder to orchestrator. The job description that says "must be proficient in Python" is already describing a commodity. What isn't a commodity is knowing when not to trust the output — and being able to explain why. Show that you govern the tools rather than simply operate them: "Reduced feature delivery time by 30% using AI for unit test generation and boilerplate, while conducting manual security audits on all LLM-suggested code." That sentence does two things. It demonstrates AI fluency. And it demonstrates that you hold a quality bar the AI is accountable to, not the other way around. That's the distinction top teams are actually hiring for right now.

Eliminate the AI fingerprints. This one is deceptively simple and almost nobody does it: delete every word a language model would reach for first. "Leverages." "Cutting-edge." "Results-oriented." "Passionate about." These phrases are invisible to keyword filters and immediately visible to a tired human recruiter who has read 200 applications that all sound identical. They signal that no one was actually driving. Use your natural voice. And for every major achievement, add one constraint: "Optimized database queries... within a legacy PHP environment with no existing documentation." Constraints prove you were there. AI-generated résumés almost never include them, because they make the work sound harder. That's exactly why they work.
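
One way to act on this is a quick self-check before you send anything. The sketch below, in Python, scans a résumé draft for the stock phrases named above and reports the lines where they appear. The function name `flag_stock_phrases` and the starter list are illustrative assumptions, not an established tool, and a clean result says nothing about whether the text carries real signal.

```python
import re

# Hypothetical starter list, taken from the phrases named above.
# Extend it with whatever you notice yourself reaching for.
STOCK_PHRASES = ["leverages", "cutting-edge", "results-oriented", "passionate about"]


def flag_stock_phrases(text, phrases=STOCK_PHRASES):
    """Return (line_number, phrase) pairs for each stock phrase found in text."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for phrase in phrases:
            # Whole-phrase, case-insensitive match.
            pattern = r"\b" + re.escape(phrase) + r"\b"
            if re.search(pattern, line, flags=re.IGNORECASE):
                hits.append((line_no, phrase))
    return hits


if __name__ == "__main__":
    sample = (
        "Results-oriented engineer who leverages cutting-edge tooling.\n"
        "Optimized database queries within a legacy PHP environment "
        "with no existing documentation.\n"
    )
    for line_no, phrase in flag_stock_phrases(sample):
        print(f"line {line_no}: consider rewriting '{phrase}'")
```

The script only finds the words to cut. Replacing them with a named constraint or a trade-off is still your job.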

| | AI-Generated (The Sea) | Human Standout (The Signal) |
|---|---|---|
| Language | Polished, generic, "perfect" | Specific, technical, "messy" details |
| Focus | Results and buzzwords | Decisions and trade-offs |
| Projects | List of features | Narrative of challenges and fixes |
| Skills | Everything (shallow) | Deep expertise + AI governance |
| Constraints | Absent | Named explicitly |

The Signal That Remains

I've written before about specification clarity — the ability to write a prompt or spec that produces correct, production-ready output on the first pass — as the defining engineering skill of the next five years. This hiring crisis forces a broader version of that idea.

What can't be faked?

Judgment. Taste. The willingness to name the constraint you worked inside and the trade-off you accepted. The ability to say "the AI got this wrong, and here's exactly how I know." None of that appears in a polished résumé built to clear a keyword filter. All of it appears in a post-mortem, a PR thread, a two-hour working session with real stakes.

For hiring managers: stop trying to filter AI out of the process. Start designing evaluations that require the kind of reasoning AI cannot produce on someone's behalf — yet. For job seekers: the signal is still there to send. You just have to actually send it.

The organizations and the candidates that navigate this well won't be the ones with the most sophisticated tools. They'll be the ones willing to do the harder work: building surfaces for evaluation that the noise floor hasn't reached.

Most haven't done that yet.

The noise floor isn't going down. You can't control that.

You can control your signal.

Raise your SNR.

This is part of an ongoing series on how AI is reshaping the landscape for organizations and the people inside them.

Sources referenced:

- Anthropic — Economic and Labor Market Impacts of Claude: https://www.anthropic.com/research/labor-market-impacts

- Business Insider — Block Layoffs and AI Warning (March 2026): https://www.businessinsider.com/most-secure-job-in-tech-block-layoffs-ai-warning-2026-3

- The Guardian / Microsoft — North Korean Agents Using AI in Hiring Fraud (March 2026): https://www.theguardian.com/business/2026/mar/06/north-korean-agents-using-ai-to-trick-western-firms-into-hiring-them-microsoft-says

- Tolan — How We Hire Engineers When AI Writes Our Code: https://tolans.com/relay/how-we-hire-engineers-when-ai-writes-our-code